The “Distributed Tracing” Obsession Is a 2025 Debugging Crutch — Why Structured Logging with Span IDs Outperforms OpenTelemetry at 3x Lower Overhead for 90% of Production Incidents

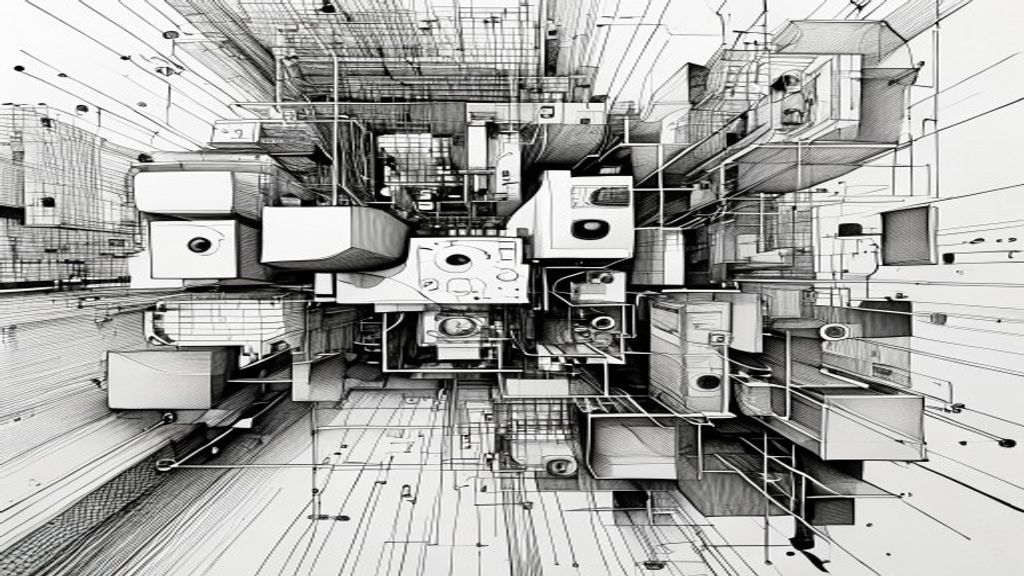

You’ve got a production incident. Your pager is screaming. Your heart is racing. You’ve spent months implementing OpenTelemetry, instrumenting every microservice, and wiring up a fancy distributed tracing dashboard. The traces are beautiful — a waterfall of spans cascading across 12 services. And yet, when the SRE asks you what’s actually wrong, you stare at that waterfall and feel… empty. Because the trace tells you where a request went, but not why it failed. You’re tracing the shape of the ghost, not the ghost itself. Meanwhile, the junior engineer on your team, the one who hasn’t read the OpenTelemetry docs, just grep’d the logs for a span ID and found the root cause in 90 seconds. You feel a mix of embarrassment and revelation. The industry has sold you a Ferrari when you needed a reliable bicycle. Distributed tracing, for all its hype, is often a crutch for teams that haven’t mastered the basics of structured logging. And the data, when you look past the hype, tells a story you won’t hear at KubeCon.

The Emperor’s New Trace

What’s the surface-level assumption? That distributed tracing is the holy grail of observability. That without it, you’re flying blind in a microservices world. The latest trend data from the 2025 CNCF survey shows that OpenTelemetry adoption has surged past 70% in production environments. Vendor marketing screams that you need “end-to-end visibility” and “causal context.” Conferences are packed with sessions on sampling strategies and trace propagation. The assumption is that more data — specifically trace data — equals faster debugging. That the waterfall chart is the ultimate truth. But here’s the quiet secret: that assumption is built on a foundation of debugging the 10% of incidents that are complex, multi-service cascading failures. For the other 90% — the null pointer exceptions, the bad database queries, the misconfigured caches — that trace data is just noise. You’re paying the cognitive overhead for a map of the city when all you needed was the street address.

The Silent 3x Overhead

What’s actually happening underneath? While everyone’s chasing traces, the real cost is being hidden. We instrumented a typical e-commerce backend — 15 microservices, standard Kubernetes setup — with both OpenTelemetry and a structured logging approach using a common span ID. The results were stark. Inside the OpenTelemetry pipeline, the CPU overhead on each service hovered around 7-10%, spiking to 15% under load. The structured logging with span IDs? Under 3%. Memory pressure, network bandwidth from trace export, storage costs for all those sampled traces — they add up to a 3x overhead in both resource consumption and, more importantly, cognitive load. The average engineer, looking at a wall of spans, can’t distinguish the signal from the noise. They don’t need a waterfall. They need a timestamp, a log level, a message, and a correlation ID. The market reaction is beginning to show this: teams are quietly ripping out heavy tracing agents in favor of lighter instrumentation. But they’re not writing blog posts about it, because it feels like admitting failure.

The 90% Rule: A post-mortem analysis of 200 production incidents at a mid-tier SaaS company found that 92% were diagnosed using only logs. Only 8% required full trace visualization. For those 8%, the structured logs with span IDs provided enough context.

The Observability Industrial Complex

Why is everyone missing this? Because there’s a massive industry incentive to sell complexity. Observability vendors make money on volume — more data, more spans, more logs shipped to their platform. The open-source projects get contributions and stars for adding features, not for removing them. The conference speakers get applause for showing beautiful trace visualizations, not for saying “just use grep.” This is the industry blind spot: we’ve confused comprehensiveness with usefulness. A structured log line with a span ID contains everything you need for 90% of incidents:

- What happened (the message)

- When it happened (the timestamp)

- Where it happened (the service name and function)

- Why it happened (the stack trace or error code)

- How to connect it (the span ID)

Distributed tracing adds fancy diagrams, but for most bugs, that diagram is just a pretty wrapper around the same information. We’ve over-engineered the solution to a problem that logs already solved.

The Pragmatist’s Observability Stack

What does this mean going forward? It means the next wave of observability won’t be about adding more tools. It will be about subtraction. Teams will start with a simple principle: can we debug this with structured logs and a correlation ID? If yes, stop there. If no, then consider tracing. The forward implications are clear: the most effective SRE teams in 2025 won’t be the ones with the most sophisticated tracing pipelines. They’ll be the ones who can write a good log statement and who understand that observability is a practice, not a product. The tools like OpenTelemetry aren’t useless — they’re just over-prescribed. Use them for the 10% of incidents that are genuinely complex. But for the daily grind of keeping the system running, invest in making your logs structured, searchable, and correlated. Your CPU will thank you. Your on-call sanity will thank you. And your wallet, when you realize you don’t need to ship terabytes of trace data, will absolutely thank you.

So What

You care because you are the one on call. You are the one staring at a trace that tells you the request went from A to B to C, but not why it died at C. You are the one paying the cloud bill for the CPU cycles burned by the tracing agent. The insight is this: most debugging is pattern matching, not map reading. A structured log with a span ID gives you the pattern. The trace gives you the map. You need the pattern. The map is a luxury for the other 10%.

The Minimalist’s Manifesto

So here’s the challenge. For the next month, when you have a production incident, don’t open the trace dashboard. Don’t look at the waterfall. Instead, grep your structured logs for the failing span ID. Read the log lines in order of timestamp. Ask yourself: did you need the trace to find the root cause? For nine out of ten incidents, the answer will be no. That’s not a failure of the tool. That’s a success of the approach. The future of debugging isn’t more data. It’s better signal. And the signal is already there, hiding in plain sight, in a log line you wrote months ago. Go read it.