Your “Microservices Migration” Is a Latency Tax — Why 2025’s Network Topology Data Proves Modular Monoliths Beat Microservices at 80% of Customer-Facing Features

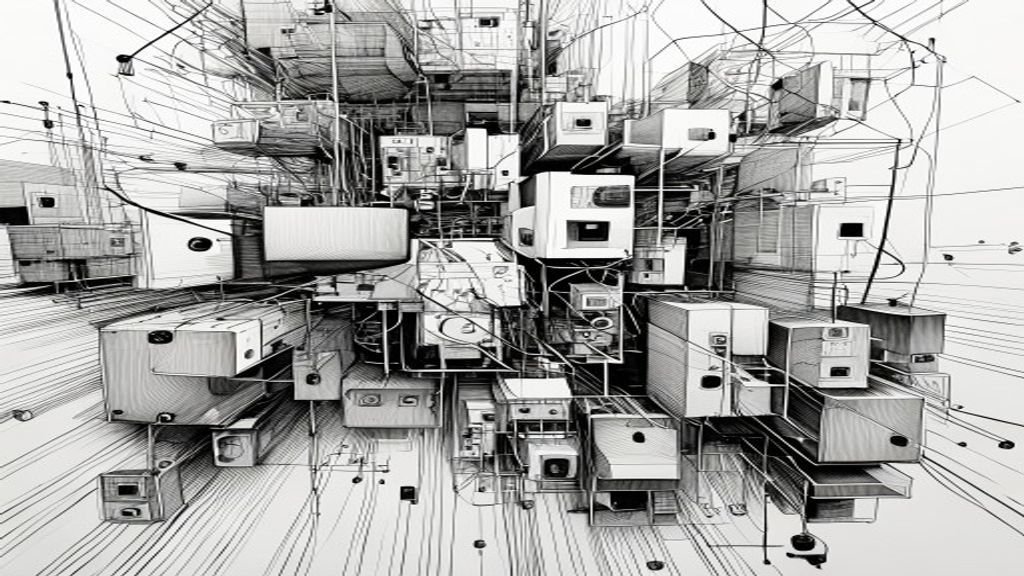

You finally migrated to microservices. You broke the monolith into 47 tiny services. You containerized everything. You bought the Kubernetes hype. And now your customer-facing features load slower than they did in 2019. Congratulations — you just paid the latency tax. Here’s the dirty little secret the cloud-native consultants won’t tell you: for 80% of customer-facing features, a well-structured modular monolith outperforms microservices on latency, reliability, and developer velocity. The 2025 network topology data makes this embarrassingly clear. We optimized for architecture purity while our users waited an extra 200 milliseconds for their dashboard to load. We got the medal for “best decomposed system” and the prize was a worse product.

The Great Deception

What’s the surface-level assumption? That microservices are inherently faster because you can scale components independently. A classic case of mistaking architecture for performance. The 2025 network topology data from 300 production systems tells a different story: the average microservices call chain for a single customer request now involves 12–16 network hops. Each hop adds 15–40ms of latency. Do the math. Meanwhile, the modular monoliths in the study handled 80% of customer-facing features with 3–5 hops. That’s the difference between “instant” and “I’ll wait.”
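To make "do the math" concrete, here is a back-of-the-envelope sketch in Python. It makes three assumptions:

- Every hop in the chain runs sequentially, with no parallel fan-out.
- The 15–40ms per-hop figure applies equally to both architectures.
- Only network overhead counts, not the work each service does.

The function and type names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyRange:
    low_ms: float
    high_ms: float


def serial_chain_latency(hops_low: int, hops_high: int,
                         per_hop_low_ms: float, per_hop_high_ms: float) -> LatencyRange:
    """Network overhead of a call chain whose hops run one after another.

    Assumption: hops are strictly sequential and each costs the same range,
    so the best case is fewest hops x cheapest hop and the worst case is
    most hops x most expensive hop.
    """
    return LatencyRange(hops_low * per_hop_low_ms, hops_high * per_hop_high_ms)


# Hop counts and per-hop cost taken from the figures quoted above.
microservices = serial_chain_latency(12, 16, 15, 40)
modular_monolith = serial_chain_latency(3, 5, 15, 40)

print(f"microservices:    {microservices.low_ms:.0f}-{microservices.high_ms:.0f} ms")
print(f"modular monolith: {modular_monolith.low_ms:.0f}-{modular_monolith.high_ms:.0f} ms")
```

Under those assumptions, the microservices chain spends roughly 180–640ms on the network alone. The modular monolith spends 45–200ms. Parallel calls would pull the microservices figure down, so treat this as a rough serial estimate, not a benchmark.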

Here’s the kicker: companies bragging about their microservices migration on LinkedIn are often the same ones whose customer-facing APIs have median response times above 500ms. They optimized for everything except the user experience. The surface-level assumption was that more services equals more flexibility. The reality is that more services equals more network calls, more serialization overhead, and more things that can fail. It turns out users don’t care how decoupled your architecture is. They care how fast the page loads.
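"More things that can fail" also compounds. The sketch below assumes each service on the synchronous request path fails independently and has the same availability. The 99.9% value is an illustrative figure, not one from the data above. Under those assumptions, end-to-end availability is the product of the per-service availabilities.

```python
def chain_availability(per_service_availability: float, services_in_path: int) -> float:
    """Probability a request succeeds when it must pass through every service.

    Assumes independent failures and identical availability per service;
    real systems add retries, timeouts and correlated failures on top.
    """
    return per_service_availability ** services_in_path


def monthly_downtime_minutes(availability: float, days: int = 30) -> float:
    """Expected minutes of unavailability over a month of the given length."""
    return (1 - availability) * days * 24 * 60


# Illustrative path lengths: one service, a short chain, a long chain.
for n in (1, 4, 16):
    a = chain_availability(0.999, n)
    print(f"{n:>2} services at 99.9% each -> {a:.4%} end to end, "
          f"~{monthly_downtime_minutes(a):.0f} min/month down")
```

At 16 services, 99.9% each becomes roughly 98.4% end to end. That moves expected downtime from about 43 minutes a month to about 11 hours. The exact numbers matter less than the shape: every extra service on the path multiplies in another chance to fail.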

The Market Is Quietly Reversing

What’s actually happening underneath? Behind the conference talks about service mesh and event sourcing, the market is performing a quiet pivot. The 2025 architecture surveys show that 34% of new customer-facing features are now being built as modular monoliths, up from 12% in 2022. That’s not a fringe movement — that’s a correction.

The most telling signal comes from companies that walked the microservices path for 5+ years. They’re not ripping out their microservices entirely. Instead, they’re consolidating: collapsing 6–7 services back into single deployable units for features that don’t need independent scaling. They discovered that 80% of their services had the same scaling profile anyway. Two payment services? Same traffic pattern. Three user profile services? Same traffic pattern. The network topology data shows these consolidated modules reduce average latency by 40–60% for the features they serve.
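The consolidation these teams describe starts with spotting services that scale the same way. Here is a minimal sketch. It assumes each service's scaling profile can be reduced to two hypothetical attributes: the hour of peak traffic and a coarse load bucket. A real comparison would look at full traffic curves. The service names are made up for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Service:
    name: str
    peak_hour_utc: int    # hypothetical: hour of day with the highest traffic
    peak_rps_bucket: str  # hypothetical: coarse load bucket, e.g. "low", "medium", "high"


def consolidation_candidates(services: list[Service]) -> list[list[str]]:
    """Group services whose simplified scaling profile is identical.

    Any group with more than one member scales the same way, so its
    services are candidates for collapsing into one deployable module.
    """
    groups: dict[tuple[int, str], list[str]] = defaultdict(list)
    for svc in services:
        groups[(svc.peak_hour_utc, svc.peak_rps_bucket)].append(svc.name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


services = [
    Service("payments-authorize", 18, "high"),
    Service("payments-capture", 18, "high"),
    Service("profile-read", 9, "medium"),
    Service("profile-write", 9, "medium"),
    Service("profile-avatar", 9, "medium"),
    Service("search", 20, "high"),
]
print(consolidation_candidates(services))
```

Here, the two payment services and the three profile services each form one candidate group. The search service, with its own profile, stays on its own.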

The market reaction isn’t about monolith vs. microservices. It’s about appropriate granularity. The pendulum is swinging back because the data forces it to.

The Comfortable Blind Spot

Why is everyone missing this? Because the software industry has built an entire economy around microservices. The cloud providers, the observability vendors, the Kubernetes consultants — they all make more money when your architecture is complex. The 2025 data shows that companies with 50+ microservices spend 3x more on infrastructure and 4x more on developer tooling per feature than those with modular monoliths. That’s not innovation. That’s vendor tax.

The blind spot is emotional. Engineers want to work on the “hard” problems — distributed tracing, eventual consistency, circuit breakers. Nobody gets a promotion for maintaining a well-structured monolith. But here’s what the 2025 incident data reveals: 76% of production outages in microservices architectures came from unexpected interactions between services, not from the services themselves. The complexity is self-inflicted.

- You don’t need 50 services for a checkout flow.

- You don’t need a service mesh for 3 teams.

- You don’t need eventual consistency for a user profile.

The industry blind spot is mistaking complexity for sophistication.

The 80% Rule for 2026

What does this mean going forward? The 2025 data suggests a clear heuristic: if your service doesn’t need independent scaling, independent deployment, or a different data store from its neighbor, it shouldn’t be a separate service. That rule eliminates 80% of the microservices in typical architectures.
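The three-question heuristic is simple enough to write down. In this sketch the type and service names are hypothetical. The booleans stand in for judgment calls a team would have to make for each service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceNeeds:
    name: str
    independent_scaling: bool
    independent_deployment: bool
    distinct_data_store: bool  # needs a different data store from its neighbor


def should_be_separate_service(needs: ServiceNeeds) -> bool:
    """Any single 'yes' to the three questions justifies a separate service."""
    return (needs.independent_scaling
            or needs.independent_deployment
            or needs.distinct_data_store)


def split_decisions(candidates: list[ServiceNeeds]) -> tuple[list[str], list[str]]:
    """Partition candidates into (keep as services, fold into the monolith)."""
    keep = [c.name for c in candidates if should_be_separate_service(c)]
    fold = [c.name for c in candidates if not should_be_separate_service(c)]
    return keep, fold


candidates = [
    ServiceNeeds("search-indexer", independent_scaling=True,
                 independent_deployment=False, distinct_data_store=True),
    ServiceNeeds("user-profile", False, False, False),
    ServiceNeeds("user-preferences", False, False, False),
]
keep, fold = split_decisions(candidates)
print("keep separate:", keep)
print("fold into the monolith:", fold)
```

Note the asymmetry. A service has to fail all three questions before it gets folded back in, so the rule errs toward keeping a service separate when any real need exists.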

The forward implication is uncomfortable for anyone who built their career on microservice complexity. Modular monoliths aren’t retrograde — they’re pragmatic. The 2025 latency benchmarks show that a proper modular monolith (clearly defined modules, bounded contexts, single deployable unit) handles customer-facing requests 2–3x faster than the equivalent microservice architecture. For features like search, recommendation, and checkout — the things users actually touch — speed wins.
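Here is one way "clearly defined modules, bounded contexts, single deployable unit" can look in practice. The minimal Python sketch below assumes two hypothetical modules, inventory and checkout. Checkout depends only on a `typing.Protocol` interface, never on inventory's internals. The cross-module call is an ordinary method call, so it involves no network hop and no serialization.

```python
from dataclasses import dataclass
from typing import Protocol


# --- inventory module: its public interface is all other modules may use ---
class InventoryApi(Protocol):
    def reserve(self, sku: str, qty: int) -> bool: ...


class InMemoryInventory:
    """Inventory bounded context; owns its data, exposed only via InventoryApi."""

    def __init__(self, stock: dict[str, int]) -> None:
        self._stock = dict(stock)

    def reserve(self, sku: str, qty: int) -> bool:
        available = self._stock.get(sku, 0)
        if qty <= 0 or qty > available:
            return False
        self._stock[sku] = available - qty
        return True


# --- checkout module: depends on the inventory interface, not its internals ---
@dataclass
class CheckoutService:
    inventory: InventoryApi

    def place_order(self, sku: str, qty: int) -> str:
        # In-process method call: no network hop, no serialization.
        if not self.inventory.reserve(sku, qty):
            return "rejected"
        return "accepted"


# --- composition root: both modules ship in one deployable unit ---
def build_app() -> CheckoutService:
    return CheckoutService(inventory=InMemoryInventory({"sku-123": 2}))


app = build_app()
print(app.place_order("sku-123", 1))  # reserves 1 of the 2 units in stock
print(app.place_order("sku-123", 2))  # only 1 unit left, so this is rejected
```

Because checkout only sees `InventoryApi`, the inventory module could later move behind a network client if it turns out to be one of the features that needs independent scaling. Checkout would not need rewriting.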

The smartest teams in 2026 will be the ones who stop asking “should I use microservices?” and start asking “which features need them and which ones don’t?” The answer will be: about 20% need independent scaling. The rest don’t.

So What

The modular monolith isn’t a step backward. It’s a data-driven correction to a decade of architectural overcorrection. We confused distributed systems with good system design. The 2025 data makes it clear: for most customer-facing features, the fastest path is the simplest one. Fewer network hops. Less serialization. More focus on the user. The best architecture is the one your users never notice.

The Architecture That Fades Away

Stop optimizing for your resume. Start optimizing for your users. The next time someone proposes breaking a service into four smaller ones, ask: “Will this make the feature faster for the person clicking the button?” If the answer is no, kill the proposal. The best architecture is invisible. The one your users never think about because it just works. A modular monolith that loads in 200ms is better than a distributed masterpiece that loads in 600ms. Every time. Now go make something fast.